Controlled Video Generation

ControlNet

Originally developed for Stable Diffusion, ControlNet is a fine-tuning architecture for generating images by passing both a text prompt and an additional control signal. By passing both the text prompt of "a man in a suit" and an additional image containing a basic pose, we get much more fine-grained control over certain aspects of the image:

Under the hood, the ControlNet architecture was simple. For one or more blocks in the neutral network, a trainable copy is created. The copied block receives the control signal as input, and generates an output that is merged with the output from the original block. By freezing the parameters of the original block, and fine-tuning only the parameters in the clone/control block, the network learns the alignment between the control signal and the output.

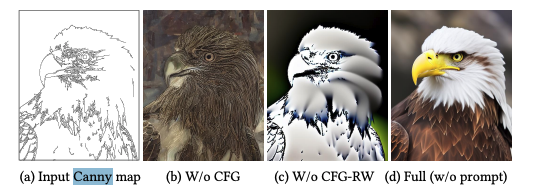

The control signal itself may vary; the image above (taken from the ControlNet paper) shows the use of a 2D image of joint poses in OpenPose format. Canny edge maps are also often useful as control signals:

"Canny" is shorthand for the edge detection algorithm developed by John F. Canny, and "Canny edge maps" are the grayscale image outputs from this algorithm that highlight the edges of objects in the input image in white. These are very cheap to compute, so Canny datasets are easy to generate from existing image datasets, and the signal itself is strong, clearly demaracting the overall shape of the object.

IC-LoRA

ControlNets have also been trained for various open source video generation models, including Alibaba's Wan2.2, Z.AI's CogVideoX.

For example, we can render a video as a depth pass in Mixreel and pass the depth output video as the control conditior for the video with a text prompt:

A confident woman strides toward the camera down a sun-drenched, empty street. Her vibrant summer dress, a flowing emerald green...

A more recent approach to structural control for video generation is In-context LoRA (aka IC-LorA).

Whereas ControlNet trains on a separate control signal, IC-LoRA is much simpler; concatenate a group of related images/prompts into a single image/prompt, mask one of the sub-images, then fine-tune a low-rank adapter to inpaint the masked portion.

The same can be applied to video; for example, LTX Video pose ICLoRA concatenates a reference video (the pose video) with a target video, and learns to reconstruct the (noised) target video from the reference and the textual prompt:

In-Context Learning

IC-LoRA is still a post-training process. It's still gluing an adapter to an existing pretrained model.

However, the shift towards in-context learning may be part of a trend that we've also seen in text and image-generation models, where the models are trained ab initio specifically to replicate the style of the inputs they are given.

In the context of generating videos, this is now referred to as "Reference-guided generation" or "Reference-to-video". The model is trained to reconstruct the output from multiple related inputs. For example, rather than training the model to generate video from a text description alone, the text could be paired with multiple headshots of each actor in the scene.

Runway, LumaLabs and ByteDance all seem to be heading in that direction. Admittedly, none of these companies are publishing sufficient technical details to know for sure. Let's hope for further clarity as 2026 progresses.